MARCH 31, 2026

A Practical Guide to Evaluating Wireless Condition Monitoring Systems

DAN BRADLEY, SENIOR EXECUTIVE @ PETASENSE

Framing the Evaluation

The identification and selection of a condition monitoring technology provider can make or break a program. Over the years, we have been involved in hundreds of evaluations, initially focused on portable data collector systems, and more recently on wireless solutions.

Organizations take a wide range of approaches, from “buy from who you know,” to running pilots, to implementing a structured, team-based evaluation process. There is no one-size-fits-all approach, as each organization’s needs vary based on industry, program maturity, available resources, and desired outcomes.

Based on our experience, the following considerations consistently lead to more successful evaluations. To support this process, we have also developed an evaluation matrix template that can be adapted to your specific requirements. It is provided at no cost with the goal of helping you make a more informed and confident decision.

Why have a formal process?

There is an old saying, “If you don’t know where you’re going, any direction will get you there.” Approaching an evaluation without clearly defined objectives often results in key challenges being overlooked or insufficiently addressed.

The two most common pitfalls we see are failing to involve the right stakeholders and not clearly defining what the program is intended to achieve. While a structured evaluation process cannot solve every challenge, it goes a long way in aligning stakeholders and establishing a shared understanding of priorities.

A formal, written evaluation approach that attempts to quantify key criteria helps reduce personal bias and ensures a more balanced assessment across the team. As a result, the final decision is more defensible and more likely to address the primary goals and concerns of the program.
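Here is one way to make "quantify key criteria" concrete: a minimal Python sketch of a weighted scoring matrix, the basic mechanism behind a spreadsheet-based evaluation. The criteria names, weights, vendor names, and 1-to-5 scores are hypothetical placeholders chosen for illustration. They are not taken from the Evaluation Spreadsheet Tool, and the function names are illustrative as well.

```python
"""Weighted scoring matrix: a minimal sketch of a quantified vendor evaluation."""

# Hypothetical criteria and relative importance (higher = more important).
# In practice these would come from your stated goals and Critical Success Factors.
CRITERIA_WEIGHTS = {
    "sensor_performance": 5,
    "software_flexibility": 4,
    "total_cost": 4,
    "company_stability": 3,
    "integration": 2,
}


def normalize(weights: dict[str, float]) -> dict[str, float]:
    """Scale weights so they sum to 1.0."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: w / total for name, w in weights.items()}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-criterion scores (1-5) into one weighted score on the same scale."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    norm = normalize(weights)
    return sum(norm[name] * scores[name] for name in norm)


def rank_vendors(
    vendor_scores: dict[str, dict[str, float]], weights: dict[str, float]
) -> list[tuple[str, float]]:
    """Return (vendor, weighted score) pairs, best first."""
    totals = {v: weighted_score(s, weights) for v, s in vendor_scores.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical team-agreed scores (1 = poor, 5 = excellent).
VENDOR_SCORES = {
    "Vendor A": {"sensor_performance": 4, "software_flexibility": 3,
                 "total_cost": 3, "company_stability": 5, "integration": 4},
    "Vendor B": {"sensor_performance": 5, "software_flexibility": 4,
                 "total_cost": 2, "company_stability": 3, "integration": 3},
}

if __name__ == "__main__":
    for vendor, score in rank_vendors(VENDOR_SCORES, CRITERIA_WEIGHTS):
        print(f"{vendor}: {score:.2f}")
```

With these placeholder numbers, Vendor A ranks first (3.72 vs 3.56) even though Vendor B scores higher on sensor performance, because cost and stability carry substantial weight. Agreeing on those weights before any vendor is scored is what keeps the result from simply reflecting individual preferences.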

Components of a successful evaluation

While it is not possible to consider every aspect here, our experience has shown that the most successful evaluations include the following:

• Stated project goals, including Critical Success Factors (CSFs). What problems are you trying to solve? These should be discussed and written down. The outcome of this exercise helps determine which criteria to include in the evaluation and how to weight them (see Evaluation Spreadsheet Tool).

• Defined budget and expected return. Establishing a realistic budget early helps narrow the scope of the evaluation and ensures alignment across stakeholders. This should include not only upfront hardware costs, but also ongoing software subscriptions, installation, and internal resource requirements. At the same time, teams should outline the expected return, whether through reduced downtime, maintenance cost savings, or risk mitigation, to ensure the investment is justified.

• Identify who should participate in the evaluation (i.e., the stakeholders). Commonly this includes Maintenance, Engineering/Reliability, IT (Digital Office), Operations, Production, and Procurement. Note: they may not all have an equal say, and how much weight each group carries should be discussed and agreed.

• How the evaluation will proceed. For example, this may include the duration of the evaluation, the number of vendors to evaluate, whether pilots will be run at this stage, and whether multiple rounds of evaluation will be required.

• Identify key contact points for both the company team and the vendors being evaluated. Try to keep these contacts consistent throughout the evaluation. Clarifying questions should be addressed as they come up, and it is good professional practice to keep vendors informed of the status and stage of the evaluation along the way.
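The budget and expected-return item above can be made concrete with a simple multi-year cost-of-ownership and payback calculation. This is a minimal sketch under stated assumptions. Every cost and savings figure is a hypothetical placeholder. Savings are assumed to be flat from year 1, and no discounting is applied. The class and function names are illustrative. A real business case would use your own figures and whichever financial method your organization prefers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProgramCosts:
    hardware: float          # one-time: sensors, gateways
    installation: float      # one-time: mounting, commissioning
    annual_software: float   # recurring: subscriptions or licences
    annual_internal: float   # recurring: internal staff time

    @property
    def upfront(self) -> float:
        return self.hardware + self.installation

    @property
    def annual(self) -> float:
        return self.annual_software + self.annual_internal


def total_cost(costs: ProgramCosts, years: int) -> float:
    """Undiscounted total cost of ownership over the given horizon."""
    return costs.upfront + costs.annual * years


def payback_years(costs: ProgramCosts, annual_savings: float) -> Optional[float]:
    """Years for net annual savings to recover the upfront spend.

    Returns None when recurring costs meet or exceed the savings,
    i.e. the program never pays back under these assumptions.
    """
    net = annual_savings - costs.annual
    if net <= 0:
        return None
    return costs.upfront / net


def simple_roi(costs: ProgramCosts, annual_savings: float, years: int) -> float:
    """(total savings - total cost) / total cost over the horizon."""
    tco = total_cost(costs, years)
    return (annual_savings * years - tco) / tco


if __name__ == "__main__":
    # All figures are hypothetical placeholders.
    plan = ProgramCosts(hardware=60_000, installation=15_000,
                        annual_software=20_000, annual_internal=10_000)
    savings = 90_000  # expected yearly benefit, e.g. avoided downtime
    print("5-year TCO:", total_cost(plan, 5))
    print("Payback (years):", payback_years(plan, savings))
    print("5-year ROI:", round(simple_roi(plan, savings, 5), 2))
```

With these placeholder inputs, the sketch reports a five-year TCO of 225,000, a payback of 1.25 years, and an ROI of 1.0 (100%). Separating one-time from recurring costs makes it easy to see how a change in subscription pricing or internal effort shifts the result.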

Lessons learned

As stated earlier, it is neither possible nor desirable to have a “one size fits all” approach. However, we can share the key areas in which we have most often identified critical items for consideration. These may help you identify the most important criteria for your evaluation.

The Company

Broadly speaking, this covers the vendor’s maturity, stability, technical capability, and commercial approach. Key considerations may include:

• Product maturity. What generation is the product on? Has it evolved over time based on real-world deployments?

• Company stability. Has the company been around long enough to demonstrate staying power and the ability to support itself financially? With 100+ vendors now offering wireless solutions globally, many of which are startups, it is reasonable to assume not all will last.

• Technical capability and expertise. Does the vendor have the in-house knowledge and tools required to support the way you intend to run your program?

• Range of applications supported. Are they focused solely on vibration monitoring for rotating equipment, or do they support a broader set of use cases such as multi-parameter monitoring for non-rotating assets as well? This becomes increasingly important as programs scale beyond initial use cases.

• Commercial model. Who owns the data? Is the offering strictly subscription-based, or is there flexibility to purchase the technology outright?
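To compare a subscription offer with an outright purchase, one simple approach is to find the month at which cumulative subscription payments catch up with the purchase price plus any ongoing support fee. The sketch below assumes flat monthly fees with no discounting, price escalation, or hardware refresh. All prices are hypothetical, and the function names are illustrative.

```python
import math
from typing import Optional


def cumulative_capex(purchase_price: float, monthly_support: float, months: int) -> float:
    """Outright purchase plus any ongoing support/maintenance fee."""
    return purchase_price + monthly_support * months


def cumulative_opex(monthly_subscription: float, months: int) -> float:
    """Pure subscription: no upfront cost, flat monthly fee."""
    return monthly_subscription * months


def breakeven_month(purchase_price: float, monthly_support: float,
                    monthly_subscription: float) -> Optional[int]:
    """First whole month at which cumulative subscription spend reaches
    the cumulative cost of buying outright.

    Returns None if the subscription never becomes more expensive.
    """
    monthly_gap = monthly_subscription - monthly_support
    if monthly_gap <= 0:
        return None
    return math.ceil(purchase_price / monthly_gap)


if __name__ == "__main__":
    # Hypothetical prices for the same deployment.
    price, support, subscription = 50_000, 500, 1_750
    print("Break-even month:", breakeven_month(price, support, subscription))
    for horizon in (24, 60):
        print(horizon, "months:",
              "buy", cumulative_capex(price, support, horizon),
              "subscribe", cumulative_opex(subscription, horizon))
```

With these placeholder prices, the two models cost the same at month 40. Subscribing is cheaper over 24 months (42,000 vs 62,000), while buying is cheaper over 60 months (80,000 vs 105,000). This illustrates why the expected program lifetime matters when weighing the commercial model.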

Application Expertise

The internet is full of examples showing the improper application of wireless condition monitoring technologies. Many of these issues stem from how sensors are deployed and how AI/ML capabilities are positioned. While it is not possible to capture every potential challenge, some of the most common considerations include:

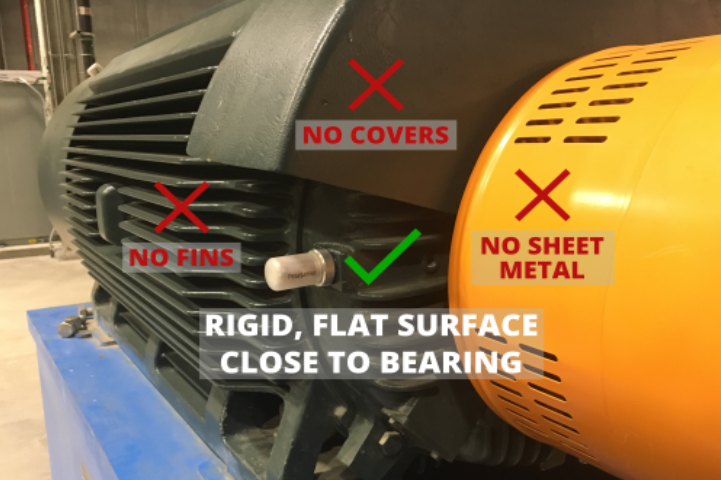

• Sensor selection and installation. The choice of sensor (type, performance, ratings), along with proper installation, is one of the most critical and commonly overlooked factors. It is essential that the selected sensor aligns with the fault modes of interest and is installed correctly. Doing this well requires an understanding of both the machine and condition monitoring principles.

• Understanding of monitored assets. Effective deployment requires knowledge of the specific asset class, whether pumps, motors, fans, or other equipment. Without this context, even well-installed systems may fail to deliver meaningful results.

• AI/ML claims and limitations. While AI (Artificial Intelligence) and ML (Machine Learning) technologies are powerful and continue to improve, they must be applied thoughtfully. Be cautious of claims such as “Our system, once trained, will fully automate your alarms” or “We train our models on sophisticated bearing test rigs in our lab.” Vendors should be able to clearly explain how their algorithms work, what data they rely on, and what level of user control or adjustment is available.

• Analysis expertise and services. Beyond the technology itself, consider whether the vendor provides access to experienced analysts or offers services to support ongoing condition monitoring. This may include data review, diagnostic insights, alarm management, and recommendations. Even with advanced software, human expertise often plays a critical role in ensuring accurate interpretation and long-term program success.

Note: Sensors can be mounted on motor cooling fins, provided fin-compatible mounting hardware is used to ensure accurate measurements.
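To check that a sensor "aligns with the fault modes of interest," you can compute the frequencies those faults are expected to produce and compare them with the sensor's usable frequency range. The sketch below uses the standard kinematic bearing defect-frequency formulas (FTF, BPFO, BPFI, BSF), which assume pure rolling with no slip. The bearing geometry, the 1,800 rpm shaft speed, the sensor limits, and the choice of three harmonics as a coverage target are hypothetical illustrations, not a recommended specification. The sketch also does not model how the mounting method affects usable bandwidth.

```python
import math
from dataclasses import dataclass


@dataclass
class BearingGeometry:
    n_elements: int               # number of rolling elements
    ball_diameter: float          # d, same units as pitch_diameter
    pitch_diameter: float         # D
    contact_angle_deg: float = 0.0


def defect_frequencies(b: BearingGeometry, shaft_hz: float) -> dict[str, float]:
    """Classic kinematic bearing defect frequencies in Hz (assumes no slip)."""
    ratio = (b.ball_diameter / b.pitch_diameter) * math.cos(math.radians(b.contact_angle_deg))
    half_n = b.n_elements / 2
    return {
        "FTF": shaft_hz / 2 * (1 - ratio),    # cage (fundamental train)
        "BPFO": half_n * shaft_hz * (1 - ratio),  # outer race
        "BPFI": half_n * shaft_hz * (1 + ratio),  # inner race
        "BSF": b.pitch_diameter / (2 * b.ball_diameter) * shaft_hz * (1 - ratio ** 2),
    }


def sensor_covers(defects: dict[str, float], sensor_max_hz: float, harmonics: int = 3) -> bool:
    """True if the sensor's usable upper frequency reaches `harmonics` multiples
    of the highest defect frequency (the harmonic count is an assumption)."""
    return max(defects.values()) * harmonics <= sensor_max_hz


if __name__ == "__main__":
    # Hypothetical bearing on a motor running at 1,800 rpm (30 Hz).
    bearing = BearingGeometry(n_elements=8, ball_diameter=10.0, pitch_diameter=50.0)
    freqs = defect_frequencies(bearing, shaft_hz=30.0)
    for name, hz in freqs.items():
        print(f"{name}: {hz:.1f} Hz")
    for limit in (400.0, 1000.0):  # hypothetical sensor upper limits
        print(f"Sensor up to {limit:.0f} Hz covers faults:", sensor_covers(freqs, limit))
```

For this made-up bearing, BPFI is the highest defect frequency at 144 Hz. A sensor rated to 400 Hz would therefore miss the third harmonic (432 Hz) under the stated coverage rule, while a 1,000 Hz sensor would not.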

Technology

The importance of the technology stack will largely depend on your program requirements, particularly whether you intend to rely on external expertise and how critical system integration is within your organization. Key considerations may include:

• System architecture (full stack vs multi-vendor). A “full stack” offering typically includes sensors, firmware, and both front- and back-end software from a single vendor. Alternatively, some solutions combine sensors and software from different providers. While a multi-vendor approach may offer flexibility, it also introduces complexity, as sensor firmware must be tightly aligned with the software. Responsibility for updates, compatibility, and troubleshooting can become more challenging when multiple vendors are involved.

• Sensor performance and specifications. This includes frequency response, environmental ratings, communication protocols, and physical footprint. There is a significant amount of misinformation in the market regarding specifications, so it is important to review these carefully and, where possible, request testing certificates to validate performance claims.

It is also important to understand how certain measurements are derived. For example, some vendors claim to measure shaft speed, when in reality it is calculated from vibration spectrum data rather than directly measured. Without a dedicated sensor such as a magnetometer, these calculated values may be less reliable, particularly in variable speed or transient operating conditions.

This highlights a broader challenge in the market, where capabilities may be presented in a way that overstates actual functionality. For this reason, evaluations should involve individuals who understand the underlying measurement techniques and can distinguish between directly measured parameters and those that are inferred.

• Data collection strategy. How data is collected and scheduled is a key differentiator. Some systems rely on fixed intervals, while others allow user-defined schedules or event-triggered measurements. Greater flexibility can help optimize battery life, data relevance, and responsiveness to changing operating conditions.

• Software capabilities and user flexibility. While most platforms provide dashboard-style visualizations, they differ significantly in the depth of tools available to users. Consider the availability of advanced analysis tools, charting options, and the ability to modify alarms, diagnostic rules, and learning models. In addition, evaluate how easily data can be shared with other systems (e.g., CMMS, historians, or enterprise platforms) and whether it can be accessed and analyzed by external tools or third-party resources. For advanced users, the ability to incorporate supervised learning and adjust system behavior is particularly important.

• Dashboard customization. The ability to tailor dashboards to different user groups (e.g., maintenance, reliability, operations, management) ensures that each stakeholder can quickly access the information most relevant to their role.

• Deployment model. Solutions may be cloud-native, deployed in a private cloud, or installed on-premises. The appropriate choice will depend on IT policies, cybersecurity requirements, and internal infrastructure.

• Commercial model (CAPEX vs OPEX). Some vendors offer only subscription-based (OPEX) models, while others allow for capital purchase (CAPEX) or hybrid approaches. This can significantly influence procurement strategy and long-term cost structure.

• Flexibility of analysis approach. Consider whether the system is designed as a full-service solution, where the vendor provides ongoing analysis, or as a platform that enables internal teams to perform their own diagnostics. Some solutions support both approaches.

• Range of asset types supported. Evaluate whether the platform is designed for a specific class of assets (e.g., rotating equipment only) or can support a broader range of equipment types and monitoring techniques, potentially reducing the need for multiple systems.

• Scalability. As programs expand from pilot phases to hundreds or thousands of assets, the system must be able to scale effectively. This includes not only data handling and system performance, but also whether AI/ML tools and workflows can support large-scale deployments without overwhelming users with data or alarms.
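The shaft-speed point under sensor performance and specifications can be illustrated with a small sketch showing how a running-speed value might be inferred from vibration data: compute the spectrum and take the largest peak in the band where 1X is expected. This is a hypothetical, simplified approach written for illustration, using pure Python, a direct-sum DFT, and synthetic signals. It is not any particular vendor's algorithm, and the function names are illustrative. It shows two limitations that a dedicated speed sensor avoids. First, the estimate is quantized to the frequency resolution (fs / N). Second, if a harmonic such as 2X is larger than 1X inside the search band, the returned "speed" is wrong.

```python
import math


def sine(freq_hz: float, amplitude: float, fs: float, n: int) -> list[float]:
    """Synthetic vibration component (used only to demonstrate the estimator)."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / fs) for i in range(n)]


def bin_amplitude(signal: list[float], fs: float, freq_hz: float) -> float:
    """Amplitude at one frequency via a direct-sum DFT (fine for a short demo)."""
    re = im = 0.0
    for i, x in enumerate(signal):
        angle = 2 * math.pi * freq_hz * i / fs
        re += x * math.cos(angle)
        im -= x * math.sin(angle)
    return 2 * math.hypot(re, im) / len(signal)


def infer_speed_hz(signal: list[float], fs: float, min_hz: float, max_hz: float) -> float:
    """Infer running speed as the largest spectral peak in [min_hz, max_hz].

    The result is quantized to the bin spacing fs / len(signal), and it is
    only correct if 1X is the dominant peak in the search band.
    """
    resolution = fs / len(signal)
    first = math.ceil(min_hz / resolution)
    last = math.floor(max_hz / resolution)
    best = max(range(first, last + 1),
               key=lambda k: bin_amplitude(signal, fs, k * resolution))
    return best * resolution


if __name__ == "__main__":
    fs, n = 1024.0, 1024  # 1 s of data -> 1 Hz bin spacing
    true_speed = 30.0     # Hz (1,800 rpm)

    # Case 1: 1X dominates, so the inferred speed matches.
    healthy = [a + b for a, b in zip(sine(true_speed, 1.0, fs, n),
                                     sine(2 * true_speed, 0.5, fs, n))]
    print(infer_speed_hz(healthy, fs, 10, 100))

    # Case 2: a strong 2X (as can occur with misalignment) outweighs 1X.
    strong_2x = [a + b for a, b in zip(sine(true_speed, 0.4, fs, n),
                                       sine(2 * true_speed, 1.0, fs, n))]
    print(infer_speed_hz(strong_2x, fs, 10, 100))
```

In the first case the estimator returns 30.0 Hz. In the second, it returns 60.0 Hz, double the true speed, and nothing in the output flags the error. This is the kind of behavior evaluators should probe when a vendor reports "measured" shaft speed without a dedicated speed sensor.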

Summary

In conclusion, a structured evaluation process will lead to a more consistent and successful outcome when comparing multiple vendors. A quantitative approach helps ensure a balanced and objective assessment, increasing the likelihood that the most critical success factors are properly considered.

Just as importantly, the evaluation should involve subject matter experts who understand the underlying technologies and measurement techniques. This helps ensure that vendor claims are properly validated and that key differences between solutions are fully understood.

We encourage you to use the downloadable Evaluation Spreadsheet Tool as a starting point and adapt it to fit your specific requirements.

About the Author

Dan Bradley is a Mechanical Engineer with 40+ years of experience in the Reliability and Condition Monitoring industry. His experience includes consulting, instrumentation and software design, and the implementation of programs around the world in a variety of industries. His previous positions include CEO of Petasense, Inc. and Global Director of SKF AB Reliability Systems, and he started his career with IRD Mechanalysis, Inc.

Thanks for subscribing - stay tuned for our next newsletter